Interpretable Credit Risk Scoring

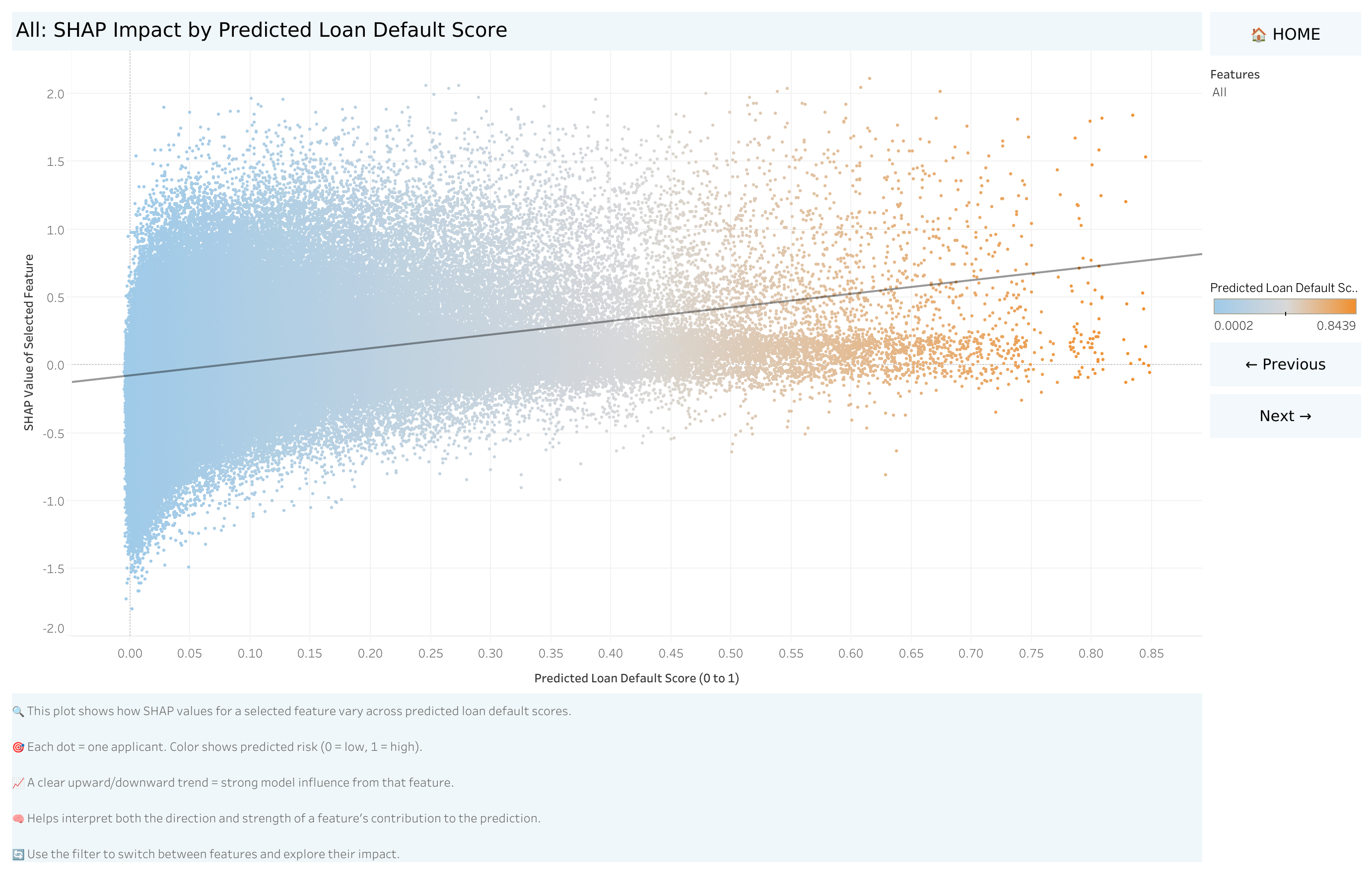

A machine learning pipeline that predicts loan default probability for 300K+ applicants, then uses SHAP and Tableau to make every prediction explainable to risk managers, auditors, and product teams.

Many loan applicants — particularly those with limited or no formal credit history — are denied financing not because they're high risk, but because traditional scoring models can't assess them. The Home Credit dataset represents exactly this population: 300K+ applicants where standard bureau data is thin or absent.

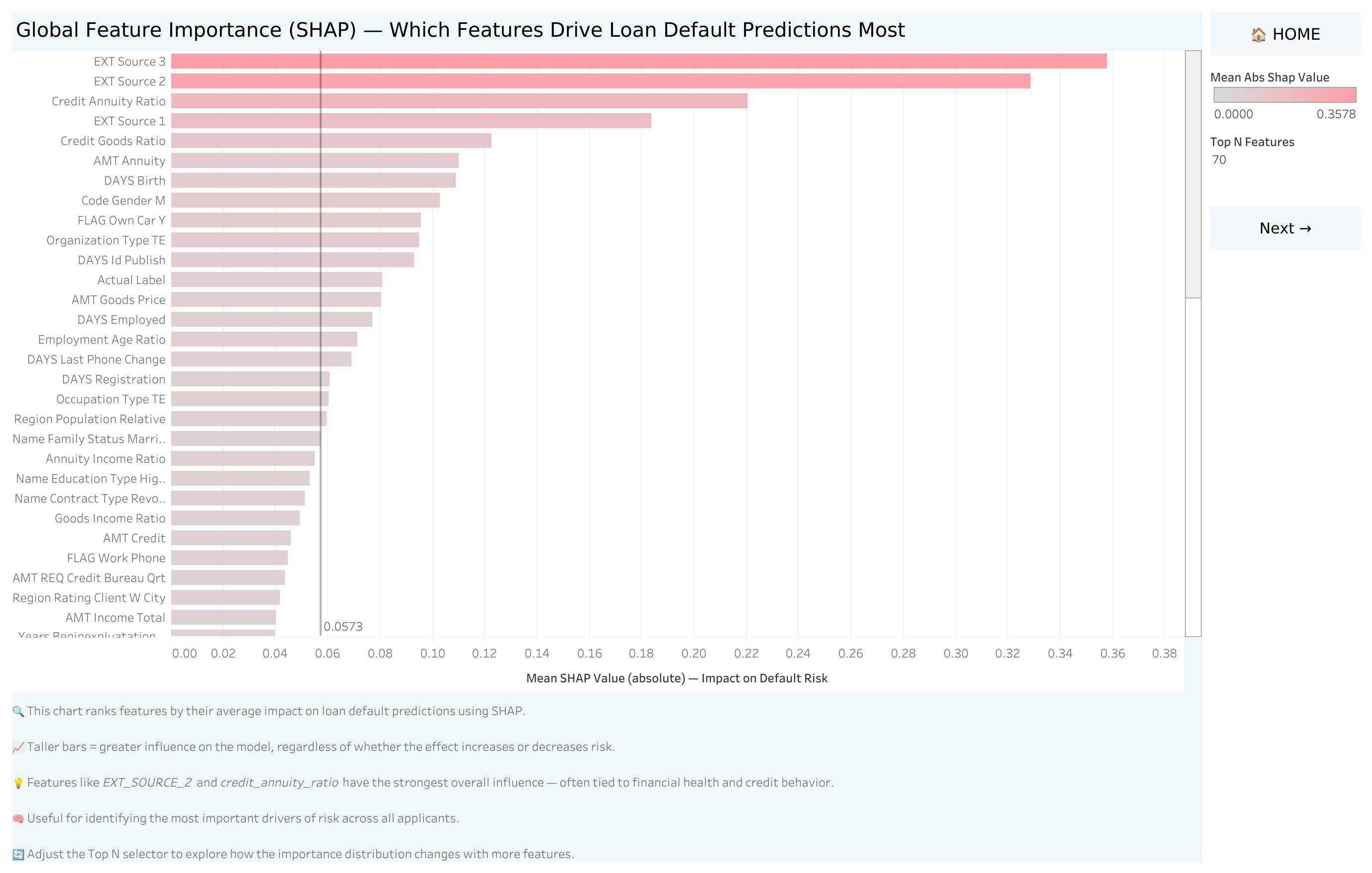

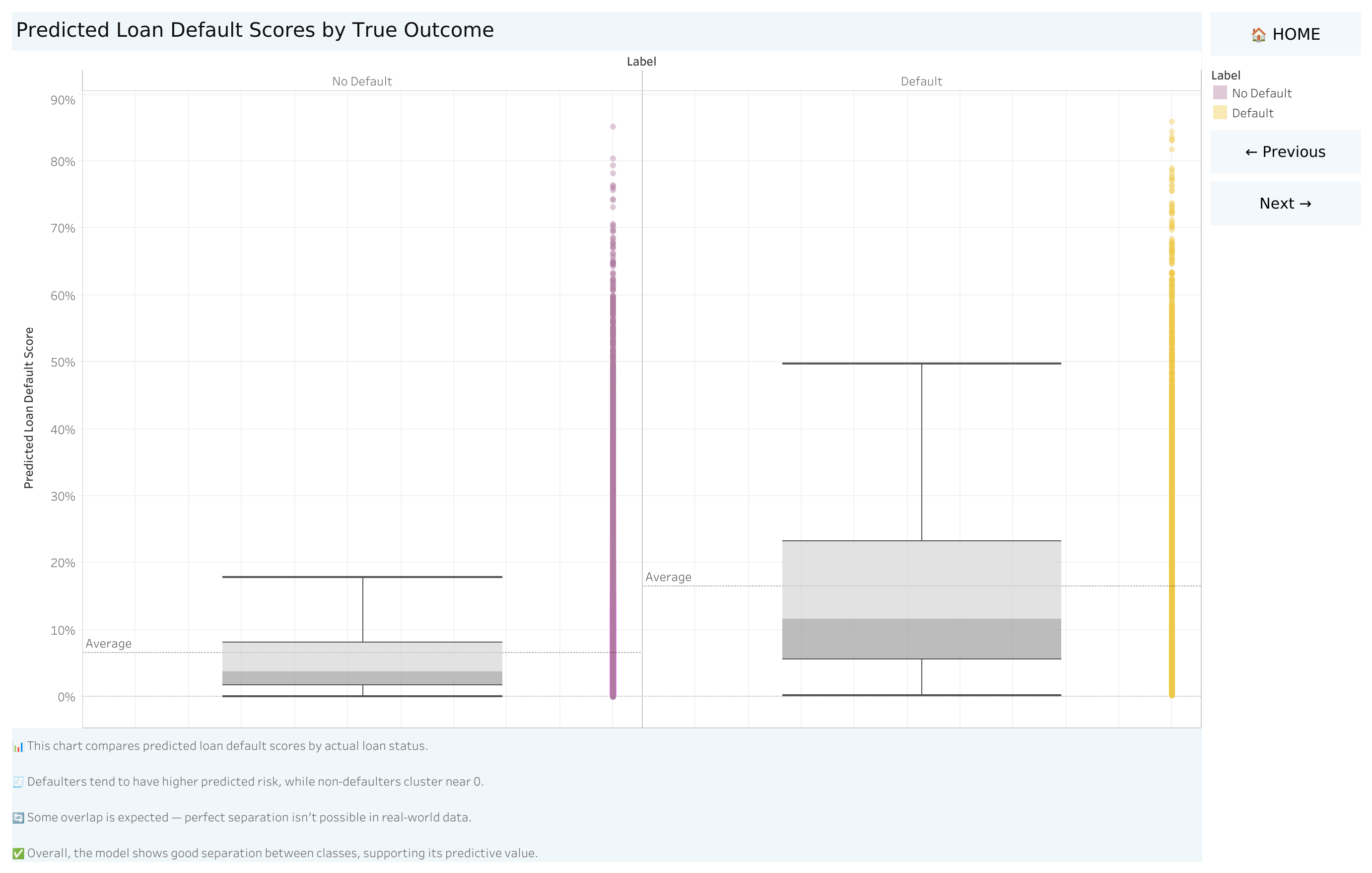

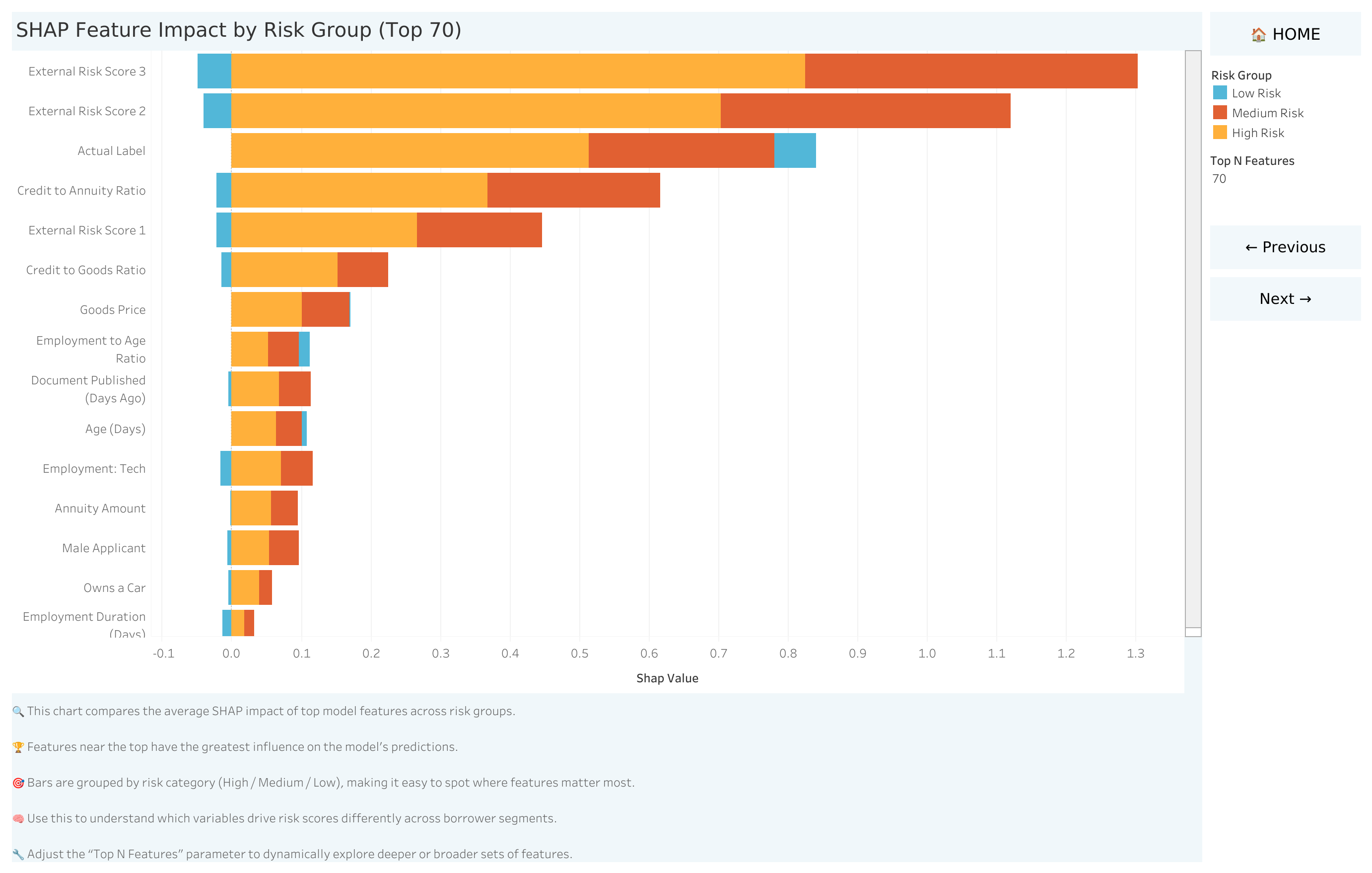

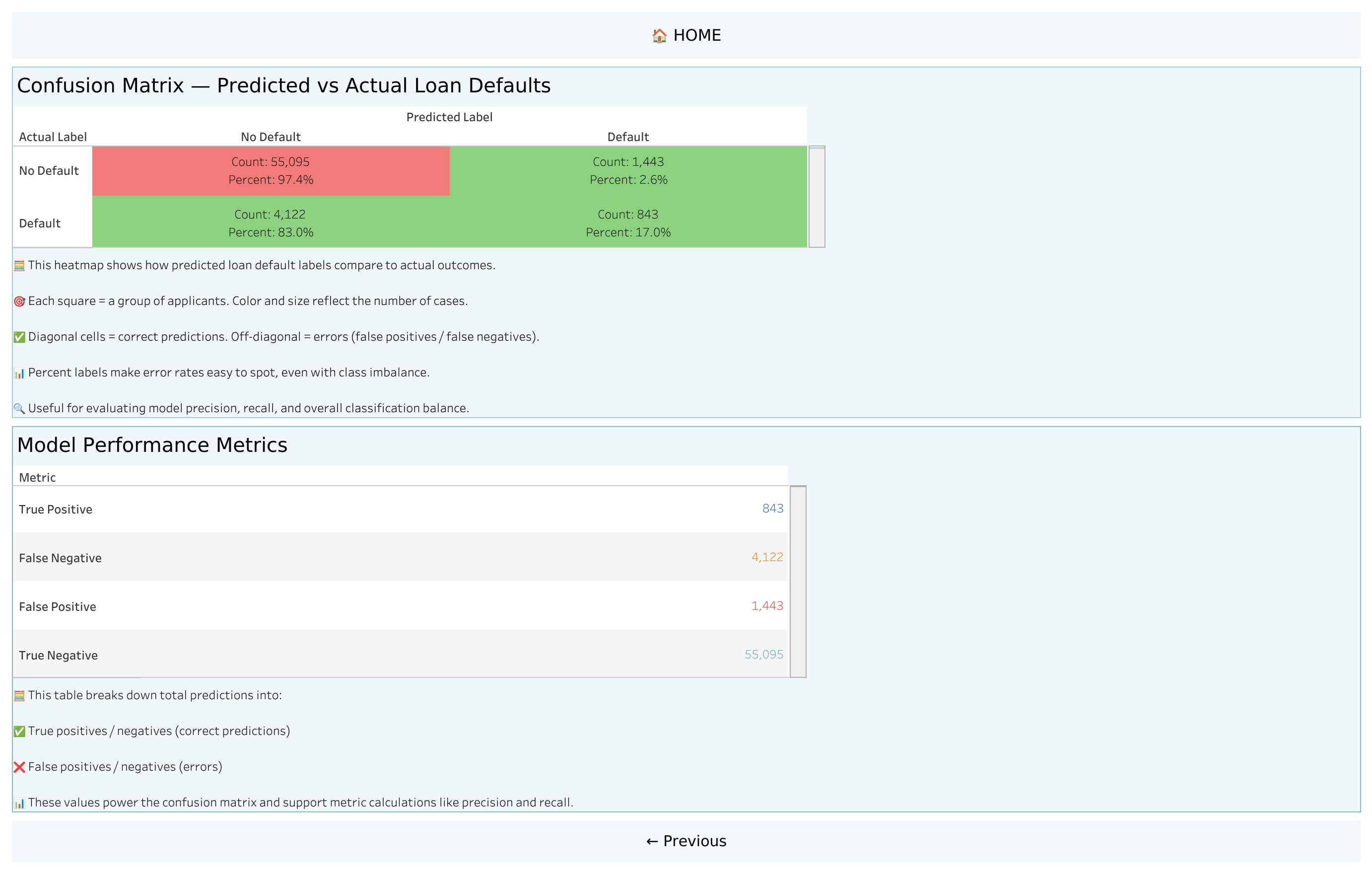

The challenge isn't just prediction accuracy. In regulated financial environments, a model that says "denied" without explanation is unusable. Risk managers need to know why an applicant was flagged. Auditors need documentation. Product teams need actionable thresholds. A black-box ML model, no matter how accurate, fails all three groups.

This project demonstrates that interpretability isn't a trade-off against accuracy — it's a requirement for deployment. A model that risk teams don't trust won't be used, regardless of its AUC score.

The SHAP + Tableau layer transforms a machine learning output into a decision support tool that risk managers can act on, auditors can review, and executives can understand. In a regulated lending environment, that's the difference between a proof of concept and a production-ready system.
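To make the hand-off from model to dashboard concrete, here is a minimal sketch of how per-applicant SHAP values could be reshaped into a long-format CSV that Tableau can read. It is an illustration under stated assumptions, not this project's implementation: the feature names, coefficients, and baseline means are hypothetical, and the logistic-regression scorer is swapped in only because its SHAP values have a closed form (`weight * (value - mean)` on the log-odds scale, assuming independent features). A tree-based model would more likely rely on the `shap` library to compute these values.

```python
import csv
import io
import math

# Hypothetical features, coefficients, and training means (illustrative only,
# not taken from the project or from the Home Credit dataset).
FEATURES = ["ext_source_mean", "credit_to_income", "years_employed"]
WEIGHTS = [-2.0, 0.5, -0.1]
INTERCEPT = -1.0
FEATURE_MEANS = [0.5, 3.0, 5.0]  # baseline used for the SHAP expectation

CSV_COLUMNS = [
    "applicant_id",
    "feature",
    "feature_value",
    "shap_log_odds",
    "default_probability",
]


def predict_log_odds(x):
    """Log-odds of default for one applicant under the assumed linear scorer."""
    return INTERCEPT + sum(w * v for w, v in zip(WEIGHTS, x))


def sigmoid(z):
    """Convert log-odds to a probability."""
    return 1.0 / (1.0 + math.exp(-z))


def linear_shap(x):
    """Closed-form SHAP values for a linear model with independent features.

    Each contribution is w_i * (x_i - mean_i), so the contributions sum to
    the gap between this prediction and the prediction at the feature means.
    """
    return [w * (v - m) for w, v, m in zip(WEIGHTS, x, FEATURE_MEANS)]


def to_long_rows(applicant_id, x):
    """One row per (applicant, feature): a shape that is easy to filter and plot."""
    probability = sigmoid(predict_log_odds(x))
    return [
        {
            "applicant_id": applicant_id,
            "feature": name,
            "feature_value": value,
            "shap_log_odds": phi,
            "default_probability": probability,
        }
        for name, value, phi in zip(FEATURES, x, linear_shap(x))
    ]


def write_long_csv(rows, handle):
    """Write the long-format rows with a header line to any text handle."""
    writer = csv.DictWriter(handle, fieldnames=CSV_COLUMNS)
    writer.writeheader()
    writer.writerows(rows)


if __name__ == "__main__":
    buffer = io.StringIO()
    write_long_csv(to_long_rows("A-001", [0.2, 4.0, 1.0]), buffer)
    print(buffer.getvalue())
```

The long format (one row per applicant and feature) is a design choice: it lets a dashboard rank contributions for a single applicant or aggregate them across a segment without reshaping the data again.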